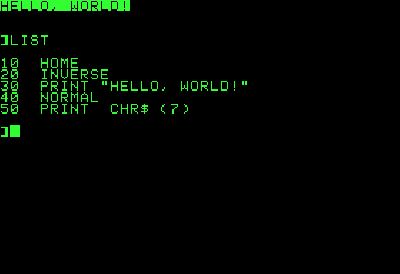

Simple Programming

FreeBASIC is a self-hosting compiler which makes use of the GNU binutils programming tools as backends and can produce console and graphical/GUI executables as well as dynamic and static libraries. FreeBASIC fully supports the use of C libraries and has partial C++ library support. This lets programmers use and create libraries for C and many other languages. It supports a C-style preprocessor capable of multiline macros, conditional compilation and file inclusion. FreeBASIC has been rated close in speed to mainstream tools such as GCC.

More about FreeBASIC

The FreeBASIC project is a set of cross-platform development tools, consisting of a compiler, a GNU-based assembler, linker and archiver, and supporting runtime libraries, including a software-based graphics library. The compiler, fbc, currently supports building for i386-based architectures on the DOS, Linux, Windows and Xbox platforms.

C hello world program: a C program to print 'hello world'. The printf library function is used to display text on screen, and '\n' moves the cursor to the beginning of the next line. C program examples: these programs illustrate various programming elements and concepts, such as using operators, loops, functions, single- and two-dimensional arrays, and performing operations on strings, files, pointers, etc. Browse the code from simple C programs to complicated ones; each of them is provided with its output.

The project also contains thin bindings (header files) to some popular 3rd party libraries such as the C runtime library, Allegro, SDL, OpenGL, GTK+, the Windows API and many others, as well as example programs for many of these libraries. FreeBASIC is a high-level programming language supporting procedural, object-orientated and meta-programming paradigms, with a syntax compatible with Microsoft QuickBASIC. In fact, the FreeBASIC project originally began as an attempt to create a code-compatible, free alternative to Microsoft QuickBASIC, but it has since grown into a powerful development tool. FreeBASIC can be seen to extend the capabilities of Microsoft QuickBASIC in a number of ways, supporting more data types, language constructs, programming styles, and modern platforms and APIs.

Any type of program can be written with FreeBASIC, see our for some notable examples.

Let’s face it, robots are cool. They’re also going to run the world someday, and hopefully at that time they will take pity on their poor soft fleshy creators (AKA ) and help us build a space utopia filled with plenty. I’m joking of course, but. In my ambition to have some small influence over the matter, I took a last year, which culminated in my building a simulator that allowed me to practice control theory on a simple mobile robot.

In this article, I’m going to describe the control scheme of my simulated robot, illustrate how it interacts with its environment and achieves its goals, and discuss some of the fundamental challenges of robotics programming that I encountered along the way.

The Challenge of the Robot: Perception vs. Reality and the Fragility of Control

The fundamental challenge of all robotics is this: it is impossible to ever know the true state of the environment. A robot can only guess the state of the real world based on measurements returned by its sensors. It can only attempt to change the state of the real world through the application of its control signals. Thus, one of the first steps in control design is to come up with an abstraction of the real world, known as a model, with which to interpret our sensor readings and make decisions.

As long as the real world behaves according to the assumptions of the model, we can make good guesses and exert control. As soon as the real world deviates from these assumptions, however, we will no longer be able to make good guesses, and control will be lost.

Often, control once lost can never be regained (unless some benevolent outside force restores it). This is one of the key reasons that robotics programming is so difficult. We often see video of the latest research robot in the lab, performing fantastic feats of dexterity, navigation, or teamwork, and we are tempted to ask, “Why isn’t this used in the real world?” Well, next time you see such a video, take a look at how highly controlled the lab environment is. In most cases, these robots are only able to perform these impressive tasks as long as the environmental conditions remain within the narrow confines of their internal model. Thus, a key to the advancement of robotics is the development of more complex, flexible, and robust models, an advancement that is subject to the limits of the available computational resources.

[Side Note: Philosophers and psychologists alike would note that living creatures also suffer from dependence on their own internal perception of what their senses are telling them. Many advances in robotics come from observing living creatures, and seeing how they react to unexpected stimuli. Think about it. What is your internal model of the world? It is different from that of an ant, and that of a fish (hopefully). However, like the ant and the fish, it is likely to oversimplify some realities of the world. When your assumptions about the world are not correct, it can put you at risk of losing control of things.

Sometimes we call this “danger.” The same way our little robot struggles to survive against the unknown universe, so do we all. This is a powerful insight for roboticists.]

The Robot Simulator

The simulator I built is written in Python and very cleverly dubbed Sobot Rimulator. You can find v1.0.0. It does not have a lot of bells and whistles, but it is built to do one thing very well: provide an accurate simulation of a robot and give an aspiring roboticist an interface for practicing robot control programming. While it is always better to have a real robot to play with, a good robot simulator is much more accessible, and is a great place to start. The software simulates a real-life research robot called the Khepera III.

In theory, the control logic can be loaded into a real Khepera III robot with minimal refactoring, and it will perform the same as the simulated robot. In other words, programming the simulated robot is analogous to programming the real robot. This is critical if the simulator is to be of any use. In this tutorial, I will be describing the robot control architecture that comes with v1.0.0 of Sobot Rimulator, and providing snippets from the source (with slight modifications for clarity).

However, I encourage you to dive into the source and mess around. Likewise, please feel free to fork the project and improve it. The control logic of the robot is constrained to these files:

• models/supervisor.py
• models/supervisor_state_machine.py
• the files in the models/controllers directory

The Robot

Every robot comes with different capabilities and control concerns. Let’s get familiar with our simulated robot. The first thing to note is that, in this guide, our robot will be an autonomous mobile robot. This means that it will move around in space freely, and that it will do so under its own control. This is in contrast to, say, an RC robot (which is not autonomous) or a factory robot arm (which is not mobile).

Our robot must figure out for itself how to achieve its goals and survive in its environment, which proves to be a surprisingly difficult challenge for a novice robotics programmer.

Control Inputs - Sensors

There are many different ways a robot may be equipped to monitor its environment. These can include anything from proximity sensors and light sensors to bumpers, cameras, and so forth.

In addition, robots may communicate with external sensors that give them information they cannot directly observe themselves. Our robot is equipped with 9 infrared proximity sensors arranged in a “skirt” in every direction. There are more sensors facing the front of the robot than the back, because it is usually more important for the robot to know what is in front of it than what is behind it. In addition to the proximity sensors, the robot has a pair of wheel tickers that track how many rotations each wheel has made. One full forward turn of a wheel counts off 2765 ticks. Turns in the opposite direction count backwards.

Control Outputs - Mobility

Some robots move around on legs.

Some roll like a ball. Some even slither like a snake.

Our robot is a differential drive robot, meaning that it rolls around on two wheels. When both wheels turn at the same speed, the robot moves in a straight line.

When the wheels move at different speeds, the robot turns. Thus, controlling movement of this robot comes down to properly controlling the rates at which each of these two wheels turn.

API

In Sobot Rimulator, the separation between the robot “computer” and the (simulated) physical world is embodied by the file robot_supervisor_interface.py, which defines the entire API for interacting with the “real world” as follows:

• read_proximity_sensors() returns an array of 9 values in the sensors’ native format
• read_wheel_encoders() returns an array of 2 values indicating total ticks since start
• set_wheel_drive_rates( v_l, v_r ) takes two values, in radians-per-second

The Goal

Robots, like people, need purpose in life. The goal of programming this robot will be very simple: it will attempt to make its way to a predetermined goal point. The coordinates of the goal are programmed into the control software before the robot is activated.

However, to complicate matters, the environment of the robot may be strewn with obstacles. The robot MAY NOT collide with an obstacle on its way to the goal. Therefore, if the robot encounters an obstacle, it will have to find its way around so that it can continue on its way to the goal.

A Simple Model

First, our robot will have a very simple model. It will make many assumptions about the world. Some of the important ones include:

• the terrain is always flat and even
• obstacles are never round
• the wheels never slip
• nothing is ever going to push the robot around
• the sensors never fail or give false readings
• the wheels always turn when they are told to

The Control Loop

A robot is a dynamic system. The state of the robot, the readings of its sensors, and the effects of its control signals are in constant flux.

Controlling the way events play out involves the following three steps:

• Apply control signals.
• Measure the results.
• Generate new control signals calculated to bring us closer to our goal.

These steps are repeated over and over until we have achieved our goal. The more times we can do this per second, the finer control we will have over the system. (The Sobot Rimulator robot repeats these steps 20 times per second, but many robots must do this thousands or millions of times per second in order to have adequate control.) In general, each time our robot takes measurements with its sensors, it uses these measurements to update its internal estimate of the state of the world.
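The apply/measure/generate cycle can be sketched as a fixed-rate loop that measures, computes the error against a reference, and applies a proportional correction. This is a minimal illustration, not Sobot Rimulator’s code: `run_control_loop`, the `measure`/`apply` callbacks, the gain `k_p`, and the stopping tolerance are all hypothetical. The loop sleeps off whatever remains of each period to hold the 20-per-second rate.

```python
import time
from typing import Callable


def run_control_loop(measure: Callable[[], float],
                     apply: Callable[[float], None],
                     reference: float,
                     k_p: float = 1.0,
                     rate_hz: float = 20.0,
                     max_steps: int = 1000,
                     tolerance: float = 1e-3) -> int:
    """Run a fixed-rate proportional control loop (hypothetical sketch).

    Returns the number of control signals applied before the error fell
    below `tolerance`, or `max_steps` if it never did.
    """
    period = 1.0 / rate_hz
    for step in range(max_steps):
        start = time.monotonic()
        # Measure the results of the previous control signal.
        error = reference - measure()
        if abs(error) < tolerance:
            return step  # close enough to the goal
        # Generate and apply a new control signal that shrinks the error.
        apply(k_p * error)
        # Sleep off the rest of this period to hold the loop rate.
        elapsed = time.monotonic() - start
        if elapsed < period:
            time.sleep(period - elapsed)
    return max_steps
```

With a toy plant that moves halfway toward each command, for example, the error halves on every pass, so the loop stops after ten applied commands once the error drops below 1e-3.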

The robot then compares this estimate to a reference value of what it wants the state to be, and calculates the error between the desired state and the actual state. Once this information is known, generating new control signals can be reduced to a problem of minimizing the error.

A Nifty Trick - Simplifying the Model

To control the robot we want to program, we have to send a signal to the left wheel telling it how fast to turn, and a separate signal to the right wheel telling it how fast to turn. Let’s call these signals v_L and v_R. However, constantly thinking in terms of v_L and v_R is very cumbersome.

Instead of asking, “How fast do we want the left wheel to turn, and how fast do we want the right wheel to turn?” it is more natural to ask, “How fast do we want the robot to move forward, and how fast do we want it to turn, or change its heading?” Let’s call these parameters velocity v and angular velocity ω. It turns out we can base our entire model on v and ω instead of v_L and v_R, and only once we have determined how we want our programmed robot to move, mathematically transform these two values into v_L and v_R with which to control the robot. This is known as a unicycle model of control.

Here is the code that implements the final transformation in supervisor.py. Note that if ω is 0, both wheels will turn at the same speed:

```python
# generate and send the correct commands to the robot
def _send_robot_commands( self ):
    # ... (v and omega are produced by the active controller; omitted here)
    v_l, v_r = self._uni_to_diff( v, omega )
    self.robot.set_wheel_drive_rates( v_l, v_r )

def _uni_to_diff( self, v, omega ):
    # v = translational velocity (m/s)
    # omega = angular velocity (rad/s)
    R = self.robot_wheel_radius
    L = self.robot_wheel_base_length

    # wheel rates in rad/s: left wheel slows and right speeds up for positive omega
    v_l = ( (2.0 * v) - (omega*L) ) / (2.0 * R)
    v_r = ( (2.0 * v) + (omega*L) ) / (2.0 * R)

    return v_l, v_r
```

Estimating State - Robot, Know Thyself

Using its sensors, the robot must try to estimate the state of the environment as well as its own state. These estimates will never be perfect, but they must be fairly good, because the robot will be basing all of its decisions on these estimations.

Using its proximity sensors and wheel tickers alone, it must try to guess the following:

• the direction to obstacles
• the distance from obstacles
• the position of the robot
• the heading of the robot

The first two properties are determined by the proximity sensor readings, and are fairly straightforward. The API function read_proximity_sensors() returns an array of nine values, one for each sensor.

We know ahead of time that the seventh reading, for example, corresponds to the sensor that points 75 degrees to the right of the robot. Thus, if this value shows a reading corresponding to 0.1 meters distance, we know that there is an obstacle 0.1 meters away, 75 degrees to the right. If there is no obstacle, the sensor will return a reading of its maximum range of 0.2 meters. Thus, if we read 0.2 meters on sensor seven, we will assume that there is actually no obstacle in that direction. Because of the way the infrared sensors work (measuring infrared reflection), the numbers they return are a non-linear transformation of the actual distance detected.

Thus, the code for determining the distance indicated must convert these readings into meters. This is done in supervisor.py as follows:

```python
from math import log

# update the distances indicated by the proximity sensors
def _update_proximity_sensor_distances( self ):
    # invert the sensor's exponential response to recover meters
    self.proximity_sensor_distances = [ 0.02 - ( log( readval / 3960.0 ) ) / 30.0
                                        for readval in self.robot.read_proximity_sensors() ]
```

Determining the position and heading of the robot (together known as the pose in robotics programming) is somewhat more challenging. Our robot uses odometry to estimate its pose.
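Once the readings are converted to meters, each distance can be paired with its sensor’s mounting direction. That yields the first two properties listed earlier, the direction to and distance from obstacles. The sketch below uses assumed mounting angles for the nine sensors, measured counterclockwise from the robot’s forward axis so that negative angles point right. For simplicity it also ignores each sensor’s offset from the robot’s center. The angle table and the `obstacle_vectors` helper are illustrative assumptions, not code from supervisor.py.

```python
import math
from typing import List, Sequence, Tuple

# Assumed mounting angles in degrees, counterclockwise from the robot's
# forward axis (negative = right side). Index 6 is the seventh sensor.
SENSOR_ANGLES_DEG = [128, 75, 42, 13, -13, -42, -75, -128, 180]
MAX_RANGE_M = 0.2  # a distance at max range means "no obstacle"


def obstacle_vectors(distances: Sequence[float]) -> List[Tuple[int, float, float]]:
    """Return (sensor_index, x, y) in the robot frame for every sensor
    that detects something closer than its maximum range."""
    hits = []
    for i, (angle_deg, d) in enumerate(zip(SENSOR_ANGLES_DEG, distances)):
        if d >= MAX_RANGE_M:
            continue  # nothing detected in this direction
        a = math.radians(angle_deg)
        # Simplification: treat each sensor as mounted at the robot's center.
        hits.append((i, d * math.cos(a), d * math.sin(a)))
    return hits
```

With only the seventh sensor reporting 0.1 m, this returns a single vector 0.1 m long pointing ahead and to the right of the robot.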

Odometry is where the wheel tickers come in. By measuring how much each wheel has turned since the last iteration of the control loop, it is possible to get a good estimate of how the robot’s pose has changed, but only if the change is small. This is one reason it is important to iterate the control loop very frequently. If we waited too long to measure the wheel tickers, both wheels could have done quite a lot, and it would be impossible to estimate where we had ended up. The full odometry function in supervisor.py updates the robot’s pose estimate. Note that the robot’s pose is composed of the coordinates x and y, and the heading theta, which is measured in radians from the positive x-axis. Positive x is to the east and positive y is to the north.

Thus a heading of 0 indicates that the robot is facing directly east. The robot always assumes its initial pose is (0, 0), 0.
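Here is a minimal sketch of a standard differential-drive odometry update; it is not the supervisor.py implementation. The figure of 2765 ticks per revolution comes from the text above, but the wheel radius and wheel base are assumed values for illustration. The update converts tick deltas to wheel travel and averages the two wheels for forward motion. It takes their difference over the wheel base as the heading change, then advances the position along the midpoint heading.

```python
import math
from typing import Tuple

TICKS_PER_REV = 2765      # from the text: one full forward wheel turn
WHEEL_RADIUS_M = 0.021    # assumed value for illustration
WHEEL_BASE_M = 0.0885     # assumed distance between the wheels

Pose = Tuple[float, float, float]  # (x, y, theta)


def update_pose(pose: Pose, prev_ticks: Tuple[int, int],
                ticks: Tuple[int, int]) -> Pose:
    """Advance the pose estimate from the change in (left, right) ticks."""
    x, y, theta = pose
    meters_per_tick = 2.0 * math.pi * WHEEL_RADIUS_M / TICKS_PER_REV
    d_left = (ticks[0] - prev_ticks[0]) * meters_per_tick
    d_right = (ticks[1] - prev_ticks[1]) * meters_per_tick

    d_center = (d_left + d_right) / 2.0           # forward travel
    d_theta = (d_right - d_left) / WHEEL_BASE_M   # heading change (rad)

    # Move along the average heading over the step (small-step assumption).
    mid_heading = theta + d_theta / 2.0
    x += d_center * math.cos(mid_heading)
    y += d_center * math.sin(mid_heading)

    # Keep theta wrapped to [-pi, pi].
    new_theta = theta + d_theta
    theta = math.atan2(math.sin(new_theta), math.cos(new_theta))
    return (x, y, theta)
```

Starting from the initial pose (0, 0), 0, one full forward turn of both wheels moves the estimate one wheel circumference east. Turning the wheels in opposite directions changes only the heading.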

Go-to-Goal Behavior

The supreme purpose in our little robot’s existence in this programming tutorial is to get to the goal point. So how do we make the wheels turn to get it there?

Let’s start by simplifying our worldview a little and assume there are no obstacles in the way. This then becomes a simple task. If we go forward while facing the goal, we will get there. Thanks to our odometry, we know what our current coordinates and heading are. We also know what the coordinates of the goal are, because they were pre-programmed. Robotics often involves a great deal of plain old trial-and-error. I encourage you to play with the control variables in Sobot Rimulator and observe and attempt to interpret the results.
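Under the no-obstacle assumption, “go forward while facing the goal” can be written as a small proportional controller on the heading error. The sketch below is hedged: it is not the controller that ships with Sobot Rimulator. The gain `k_p`, the top speed `v_max`, and the rule that lowers v as |ω| grows are illustrative assumptions. The heading error is wrapped into [-π, π] with `atan2(sin, cos)` so that the robot always turns the short way around.

```python
import math
from typing import Tuple


def go_to_goal(pose: Tuple[float, float, float], goal: Tuple[float, float],
               k_p: float = 4.0, v_max: float = 0.3) -> Tuple[float, float]:
    """Return (v, omega) steering the robot toward `goal` (hypothetical sketch)."""
    x, y, theta = pose
    gx, gy = goal
    # Direction from the estimated position to the goal.
    desired_heading = math.atan2(gy - y, gx - x)
    # Heading error, wrapped to [-pi, pi].
    error = desired_heading - theta
    error = math.atan2(math.sin(error), math.cos(error))
    omega = k_p * error                  # turn rate proportional to the error
    v = v_max / (1.0 + abs(omega))       # slow down while turning hard
    return v, omega
```

The returned pair is exactly what `_uni_to_diff` expects, so its output can be converted into wheel rates without further changes.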

Changes to the following all have profound effects on the simulated robot’s behavior:

• the error gain kP in each controller
• the sensor gains used by the Avoid-Obstacles controller
• the calculation of v as a function of ω in each controller
• the obstacle standoff distance used by the Follow-Wall controller
• the switching conditions used by supervisor_state_machine.py
• pretty much anything else

When Robots Fail

We’ve done a lot of work to get to this point, and this robot seems pretty clever. Yet, if you run Sobot Rimulator through several randomized maps, it won’t be long before you find one that this robot can’t deal with. Sometimes it drives itself directly into tight corners and collides.

Sometimes it just oscillates back and forth endlessly on the wrong side of an obstacle. Occasionally it is legitimately imprisoned with no possible path to the goal. After all of our testing and tweaking, sometimes we must come to the conclusion that the model we are working with just isn’t up to the job, and we have to change the design or add functionality. In the robot universe, our little robot’s “brain” is on the simpler end of the spectrum. Many of the failure cases it encounters could be overcome by adding some more advanced AI to the mix.

More advanced robots make use of techniques such as mapping, to remember where they have been and avoid trying the same things over and over; heuristics, to generate acceptable decisions when there is no perfect decision to be found; and, to more perfectly tune the various control parameters governing the robot’s behavior.

Conclusion

Robots are already doing so much for us, and they are only going to be doing more in the future. While robotics programming is a tough field of study requiring great patience, it is also a fascinating and immensely rewarding one. I hope you will consider in the shaping of things to come! Acknowledgement: I would like to thank and of the Georgia Institute of Technology for teaching me all this stuff, and for their enthusiasm for my work on Sobot Rimulator.

There are major portions of the code that are transferable to other robots. However, this code was designed for the Khepera III research robot, and therefore if the robot it runs on has major physical differences from the K3, this exact code will not control your robot correctly. You will need to make changes to reflect the physical attributes of your robot.

For example, this control system is built for a robot with a certain wheel radius, wheel base, and sensor configuration. If these elements are only slightly different on your robot, you may be able to get away with making small changes to the source code. However, if your robot is significantly different, it will be necessary to redesign significant parts of the control system.

Robots require their software to guarantee that control commands are created rapidly and without delay, and that they do so using resources that are often much more limited than your average laptop. Failure to generate up-to-date, reliable control signals in time results in the robot losing control, and in the case of the most advanced robotics, this can be very dangerous, expensive, or even deadly. For this reason, the languages of choice for industrial and commercial robotics developers are those that offer the best performance, often at the cost of readability and maintainability. The clear winner in this category remains good old C, which is used in the majority of these applications. C++ is often used quite extensively. In some cases, specific applications use niche languages that are specifically well-suited to the task.

For example, I've heard that Ada (a language I am not familiar with) is used a lot for avionics. If you are passionate about robotics and want to program industrial robotics systems, I recommend learning C. This will allow you to work on advanced robotics projects, and will make learning any other language you are likely to need later much easier.

Hi Zainab Shihab. You need to find the documentation for the sensors in your robot, which should tell you how the readings correspond to actual distances.

For the Khepera III's infrared sensors, the reading R corresponds to the actual distance d as follows:

$$R = 3960\,e^{-30(d - 0.02)}$$

where d is in meters. This is sort of a bizarre equation, but I believe it just represents the underlying physics involved in bouncing infrared light off of objects and detecting the amount that reflects back into the sensor.

Since the sensor is just a dumb component that does not contain a computer, it is our responsibility to convert the readings back into usable distances. In the case of the Khepera III, we simply find the inverse of the above formula as follows:

$$
\begin{aligned}
R &= 3960\,e^{-30(d - 0.02)} \\
\frac{R}{3960} &= e^{-30(d - 0.02)} \\
-30(d - 0.02) &= \ln\left(\frac{R}{3960}\right) \\
d - 0.02 &= -\frac{1}{30}\ln\left(\frac{R}{3960}\right) \\
d &= 0.02 - \frac{1}{30}\ln\left(\frac{R}{3960}\right)
\end{aligned}
$$

The last line is the formula used in Sobot Rimulator.
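The derivation can be checked numerically by running the forward model and its closed-form inverse back to back. For a sensor whose response curve has no tidy algebraic inverse, a generic fallback is to invert it numerically. The sketch below uses bisection for this, assuming only that the reading decreases steadily with distance over the sensor’s range. The helper names are hypothetical, and the constants are the Khepera III values from the formula above.

```python
import math
from typing import Callable

K3_SCALE = 3960.0   # constants from the Khepera III formula above
K3_DECAY = 30.0     # per meter
K3_OFFSET = 0.02    # meters


def reading_from_distance(d: float) -> float:
    """Forward model: R = 3960 * exp(-30 * (d - 0.02))."""
    return K3_SCALE * math.exp(-K3_DECAY * (d - K3_OFFSET))


def distance_from_reading(r: float) -> float:
    """Closed-form inverse: d = 0.02 - ln(R / 3960) / 30."""
    return K3_OFFSET - math.log(r / K3_SCALE) / K3_DECAY


def invert_by_bisection(model: Callable[[float], float], reading: float,
                        lo: float, hi: float, iterations: int = 60) -> float:
    """Numerically invert a sensor model that decreases with distance on [lo, hi]."""
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if model(mid) > reading:
            lo = mid  # predicted reading too strong: obstacle is farther away
        else:
            hi = mid  # predicted reading too weak: obstacle is closer
    return (lo + hi) / 2.0
```

At the formula’s 0.02 m offset the model returns exactly 3960. Over the 0.2 m range, bisection agrees with the closed form to well below a micrometer.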

So, if you can find the equation that relates reading values to actual distances for your sensors, you should be able to invert that function in order to map those readings back into actual distance values. Hope that helps!

Hi Norman, the obstacles are indeed created randomly. The code that does this can be found here. The algorithm generates a randomized set of RectangleObstacles, ensuring their properties are within reasonable ranges, and then adds them to the simulation world.
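The generation step described above can be sketched as drawing each rectangle’s pose and size from bounded uniform ranges. This is a hypothetical illustration, not the simulator’s code. The `RectangleObstacle` dataclass here is a stand-in for the real class. The world size, side-length limits, and the clearance kept around the robot’s start point at the origin are assumed values. The clearance check only looks at each rectangle’s center, which is a simplification.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RectangleObstacle:   # hypothetical stand-in for the simulator's class
    x: float               # center position (m)
    y: float
    theta: float           # rotation (rad)
    width: float           # side lengths (m)
    height: float


def random_obstacles(count: int, seed: Optional[int] = None,
                     world_half_size: float = 2.0,
                     min_side: float = 0.1, max_side: float = 0.3,
                     clearance: float = 0.3) -> List[RectangleObstacle]:
    """Generate `count` rectangles with properties drawn from bounded ranges."""
    rng = random.Random(seed)   # seeded generator makes maps reproducible
    obstacles: List[RectangleObstacle] = []
    while len(obstacles) < count:
        x = rng.uniform(-world_half_size, world_half_size)
        y = rng.uniform(-world_half_size, world_half_size)
        if math.hypot(x, y) < clearance:
            continue  # keep the robot's start point (0, 0) clear
        obstacles.append(RectangleObstacle(
            x=x, y=y,
            theta=rng.uniform(-math.pi, math.pi),
            width=rng.uniform(min_side, max_side),
            height=rng.uniform(min_side, max_side)))
    return obstacles
```

Passing the same seed reproduces the same map, which helps when re-running a map on which the robot failed.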

To simulate a sensor detecting an obstacle, the simulator tests the obstacle against a single directed line segment which projects outwards from the sensor. The test is a simple intersection test against the edges of the obstacle. The code for this test is here. It takes the line segment and the geometry of the obstacle being tested, and returns a few useful values to help determine the location of the obstacle. This method is in turn called from the physics engine, here. Finally, the real distance is converted into a sensor reading, which is done here.
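The intersection test described above can be sketched as a standard segment-segment intersection against each edge of a polygonal obstacle, keeping the nearest hit. This is an illustrative version, not the simulator’s code. `segment_hit`, `sensed_distance`, and the vertex-list obstacle representation are hypothetical. Parallel or collinear edges are simply treated as misses.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


def _cross(ax: float, ay: float, bx: float, by: float) -> float:
    """2D cross product (z component)."""
    return ax * by - ay * bx


def segment_hit(p: Point, p2: Point, q: Point, q2: Point) -> Optional[float]:
    """Return the fraction t along p->p2 where it crosses q->q2, or None."""
    rx, ry = p2[0] - p[0], p2[1] - p[1]
    sx, sy = q2[0] - q[0], q2[1] - q[1]
    denom = _cross(rx, ry, sx, sy)
    if denom == 0.0:
        return None  # parallel or collinear: treated as no hit
    qpx, qpy = q[0] - p[0], q[1] - p[1]
    t = _cross(qpx, qpy, sx, sy) / denom   # position along the sensor ray
    u = _cross(qpx, qpy, rx, ry) / denom   # position along the obstacle edge
    if 0.0 <= t <= 1.0 and 0.0 <= u <= 1.0:
        return t
    return None


def sensed_distance(origin: Point, angle: float, max_range: float,
                    polygon: Sequence[Point]) -> float:
    """Distance to the nearest edge of `polygon` along the sensor ray,
    or `max_range` if the ray hits nothing."""
    end = (origin[0] + max_range * math.cos(angle),
           origin[1] + max_range * math.sin(angle))
    best = max_range
    for i in range(len(polygon)):
        t = segment_hit(origin, end, polygon[i], polygon[(i + 1) % len(polygon)])
        if t is not None:
            best = min(best, t * max_range)  # ray length is max_range
    return best
```

A real distance found this way would then pass through the sensor’s forward model to produce the simulated reading.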

The sensors’ line segments are visualized in this screenshot. NOTE FOR READERS: The above refers to the physical simulation of the robot and its environment. The robot's control system, which is the subject of this article, is a separate entity. The goal of the simulation is to provide a realistic environment with which the robot's control system interacts.